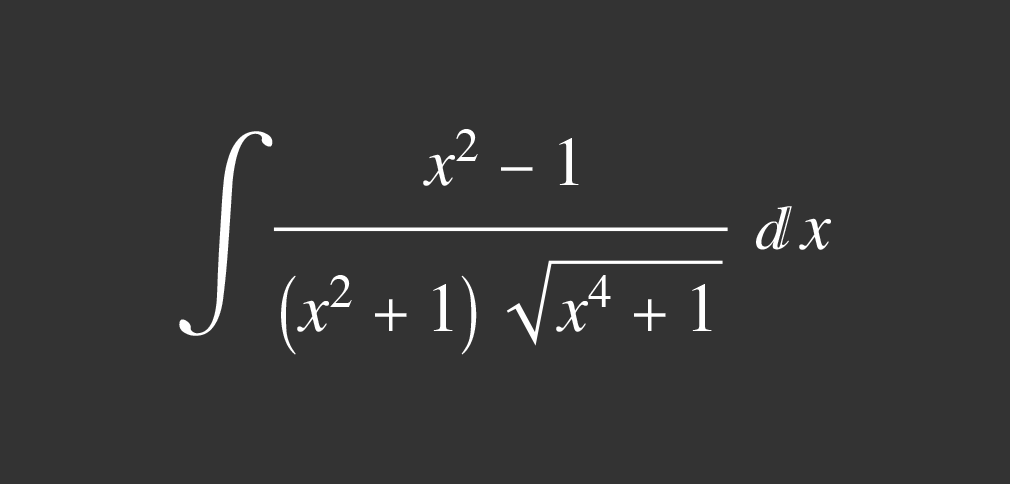

There are simple functions for which we cannot find antiderivatives in terms of the functions we know. Some examples are:

Another problem that arises in calculus is calculating the values of functions. For a given polynomial like $3x^2 + 7x + 1$, it’s simple to calculate the value of the function for various values of $x$, but it’s not so simple for a function like $\sin(x)$. To calculate the value of the function at some value of $x$, we would have to construct a right triangle containing the desired angle $x$ and then measure the opposite side and the hypotenuse. However, this process is not very accurate if $x$ is, for example, 30°50'47".

The answer to the problems above is to approximate unmanageable functions with manageable ones, while also precisely determining the incurred error. If we are to approximate a given function $f(x)$ by $g(x)$, we should make $g(x)$ relatively simple so that we can calculate its values. The simplest functions to work with are polynomials, and therefore we should approximate the function by polynomials.

First, let’s look into the simpler problem of approximating a function around one value of $x$. Let’s say that we have the function $f(x)$ and we want to approximate its value near $x = 0$. Let’s consider the polynomial $g(x)$ as an approximation to $f(x)$:

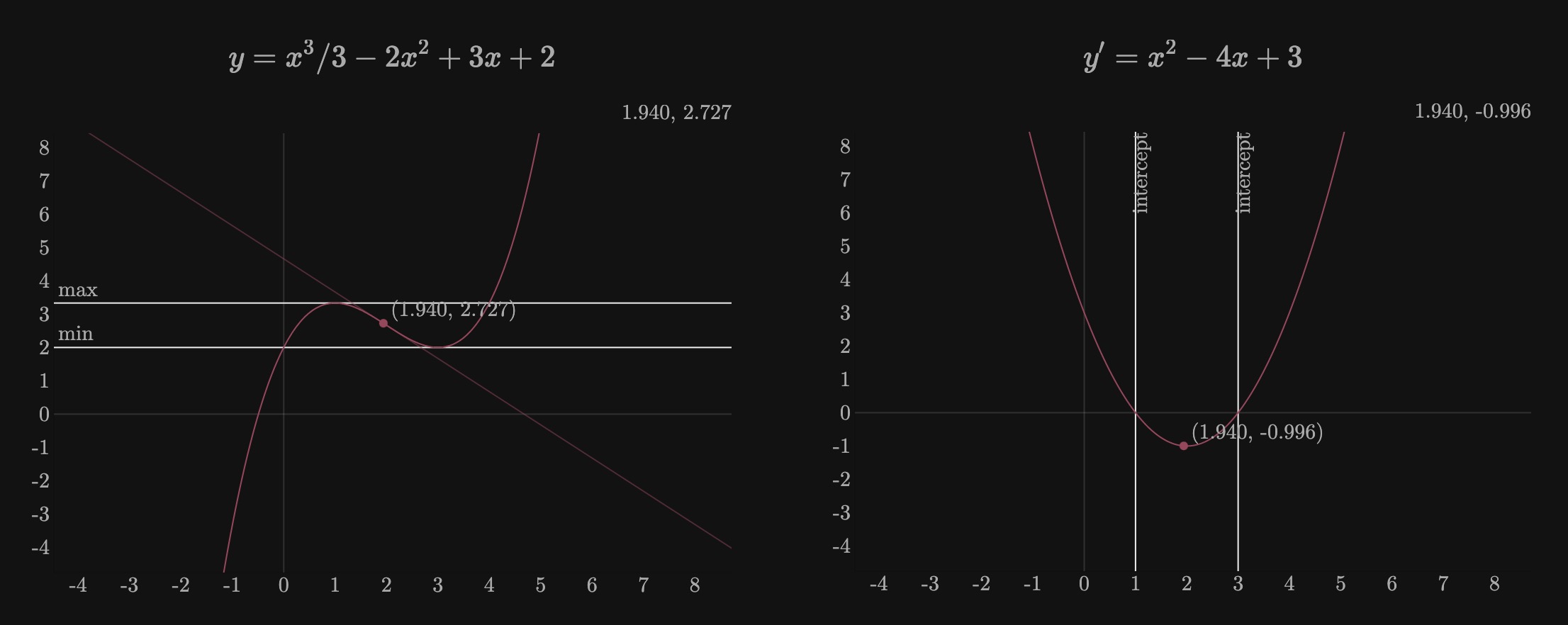

We can make $g(x)$ agree with $f(x)$ at $x = 0$ because, with $g(0) = c_0$, we can take $c_0$ to be $f(0)$. If we expect $g(x)$ to approximate $f(x)$ near $x = 0$, we would also expect the tangent line of $g(x)$ at $x = 0$ to approximate the curve of $f(x)$ closely at the point of tangency. Hence, we should make the slope of $g(x)$ agree with the slope of $f(x)$ at $x = 0$. Differentiating $g(x)$:

$$
g'(x) = c_1 + 2c_2 x + 3c_3 x^2 + \ldots + n c_n x^{n - 1}
$$

At $x = 0$, $g'(0) = c_1$. If $g'(0)$ agrees with $f'(0)$, then:

$$
c_1 = f'(0)
$$

We can apply the same idea by making $g''(x)$ agree with $f''(x)$ at $x = 0$. Differentiating $g'(x)$:

$$
g''(x) = 2c_2 + 2 \cdot 3c_3 x + \ldots + n(n - 1) c_n x^{n - 2}
$$

Then $g''(0) = 2c_2$, and if $g''(0)$ is the same as $f''(0)$, then $f''(0) = 2c_2$, or:

$$
c_2 = \frac{f''(0)}{2}
$$

To determine $c_3$, we would make the third derivatives of both functions agree at $x = 0$:

$$
g'''(x) = 2 \cdot 3c_3 + \ldots + n(n - 1)(n - 2) c_n x^{n - 3}
$$

Then $g'''(0) = 2 \cdot 3c_3$, and if this is the same as $f'''(0)$, then:

$$
c_3 = \frac{f'''(0)}{2 \cdot 3}
$$

We can see that the $n$-th derivative of $g(x)$ is $g^{(n)}(x) = n(n - 1)(n - 2) \cdots 1 \cdot c_n = n!\,c_n$, and if $g^{(n)}(0)$ is equal to $f^{(n)}(0)$:

$$
c_n = \frac{f^{(n)}(0)}{n!}
$$
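The pattern $g^{(n)}(0) = n!\,c_n$ can be checked mechanically. The following is a minimal sketch, assuming a polynomial is stored as a coefficient list `[c_0, c_1, ...]`; the helper names and the sample coefficients are hypothetical and chosen only for illustration.

```python
from math import factorial


def differentiate(coeffs):
    """Differentiate c_0 + c_1*x + c_2*x^2 + ... given as [c_0, c_1, c_2, ...]."""
    # The term c_k * x^k becomes k * c_k * x^(k-1); the constant term drops out.
    return [k * c for k, c in enumerate(coeffs)][1:]


def nth_derivative_at_zero(coeffs, n):
    """Value of the n-th derivative of the polynomial at x = 0."""
    for _ in range(n):
        coeffs = differentiate(coeffs)
    # At x = 0 only the constant term of the differentiated polynomial survives.
    return coeffs[0] if coeffs else 0


# Arbitrary illustrative polynomial: 5 - 2x + 7x^2 + 4x^3 + 3x^4
sample = [5, -2, 7, 4, 3]
for n, c_n in enumerate(sample):
    assert nth_derivative_at_zero(sample, n) == factorial(n) * c_n
```

Each assertion confirms that differentiating $n$ times and evaluating at zero isolates exactly one coefficient, scaled by $n!$.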

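Because we required that each successive derivative of $g(x)$ agrees with the corresponding derivative of $f(x)$ at $x = 0$, $g(x)$ takes the form:

$$
\begin{equation}
g(x) = f(0) + f'(0)x + \frac{f''(0)}{2!}x^2 + \frac{f'''(0)}{3!}x^3 + \ldots + \frac{f^{(n)}(0)}{n!}x^n
\label{gx}
\end{equation}
$$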
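As an illustration of the expansion around $x = 0$, here is a small sketch for $f(x) = \sin(x)$, whose derivatives at zero cycle through $0, 1, 0, -1$. The function name `maclaurin_sin` and the test point $x = 0.5$ are assumptions made for this example; `math.sin` is used only to measure the error.

```python
import math


def maclaurin_sin(x, degree):
    """Polynomial approximation of sin(x) around 0, up to the given degree."""
    # Derivatives of sin at 0: sin(0)=0, cos(0)=1, -sin(0)=0, -cos(0)=-1, then repeat.
    cycle = [0, 1, 0, -1]
    return sum(cycle[n % 4] * x**n / math.factorial(n) for n in range(degree + 1))


x = 0.5  # radians
for degree in (1, 3, 5, 7):
    approx = maclaurin_sin(x, degree)
    print(degree, approx, abs(approx - math.sin(x)))
```

The printed error shrinks as the degree grows, which is the behavior we expect from matching more derivatives at $x = 0$.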

We could equally make the approximation near any other value of $x$, e.g., $x = a$. Thus, the proper form of $g(x)$, which generalizes the form \eqref{gx}, is:

$$
g(x) = c_0 + c_1(x - a) + c_2(x - a)^2 + \ldots + c_n(x - a)^n
$$

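Then the final formula for approximating a function $f(x)$ by a polynomial $g(x)$ near $x = a$ is:

$$
\begin{equation}
f(x) \approx f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \ldots + \frac{f^{(n)}(a)}{n!}(x - a)^n
\label{taylor}
\end{equation}
$$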
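To make the expansion around $x = a$ concrete, the sketch below approximates $\sin(x)$ at the angle 30°50'47" by expanding around $a = 30°$, where the sine and cosine are known exactly. The helper `taylor_polynomial` and the choice of a third-degree polynomial are assumptions for this example, not part of the original derivation.

```python
import math


def taylor_polynomial(derivatives_at_a, a, x):
    """Evaluate sum of f^(n)(a) * (x - a)^n / n! for the supplied derivatives.

    derivatives_at_a is [f(a), f'(a), f''(a), ...].
    """
    return sum(
        d * (x - a) ** n / math.factorial(n)
        for n, d in enumerate(derivatives_at_a)
    )


a = math.pi / 6                                  # 30 degrees in radians
x = math.radians(30 + 50 / 60 + 47 / 3600)       # 30 deg 50' 47" in radians

sin_a, cos_a = 0.5, math.sqrt(3) / 2             # exact values at 30 degrees
derivatives = [sin_a, cos_a, -sin_a, -cos_a]     # f, f', f'', f''' of sin at a

approx = taylor_polynomial(derivatives, a, x)
print(approx, abs(approx - math.sin(x)))         # math.sin only checks the result
```

Expanding around a nearby point where the function values are known keeps $x - a$ small, so a low-degree polynomial is already accurate.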

Taylor’s Theorem

Formula \eqref{taylor} approximates the value of a function $f(x)$ near the point $a$; however, it does not tell us how good the approximation is numerically. At $x = a$, $g(a) = f(a)$, which is exact. However, for any $x$ near $a$, such as $a + h$, we only have $g(a + h) \approx f(a + h)$. The difference $f(a + h) - g(a + h)$ is the error in approximating $f(x)$ by the polynomial $g(x)$. The formula that approximates $f(x)$, considering also the error, was first given by Brook Taylor.

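For any function $f(x)$ which has $(n + 1)$ derivatives in the interval from $a$ to $x$:

$$
f(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \ldots + \frac{f^{(n)}(a)}{n!}(x - a)^n + \frac{f^{(n + 1)}(\mu)}{(n + 1)!}(x - a)^{n + 1}
$$

where $\mu$ is between $a$ and $x$.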
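Since the exact value of $\mu$ is unknown, the error term $\frac{f^{(n + 1)}(\mu)}{(n + 1)!}(x - a)^{n + 1}$ is usually used as a bound: if $|f^{(n + 1)}|$ never exceeds some $M$ between $a$ and $x$, the error is at most $M|x - a|^{n + 1}/(n + 1)!$. The sketch below checks this for $\sin(x)$, where $M = 1$ works for every derivative. The function names and the sample angle are assumptions for illustration.

```python
import math


def remainder_bound(max_abs_derivative, a, x, n):
    """Upper bound on the error of the degree-n Taylor polynomial around a."""
    return max_abs_derivative * abs(x - a) ** (n + 1) / math.factorial(n + 1)


def taylor_sin(a, x, n):
    """Degree-n Taylor polynomial of sin around a, evaluated at x."""
    # Derivatives of sin cycle through sin, cos, -sin, -cos.
    cycle = [math.sin(a), math.cos(a), -math.sin(a), -math.cos(a)]
    return sum(cycle[k % 4] * (x - a) ** k / math.factorial(k) for k in range(n + 1))


a = math.pi / 6                                  # 30 degrees
x = math.radians(30 + 50 / 60 + 47 / 3600)       # 30 deg 50' 47"

errors_and_bounds = []
for n in range(4):
    actual_error = abs(math.sin(x) - taylor_sin(a, x, n))
    bound = remainder_bound(1.0, a, x, n)        # |sin^(n+1)| <= 1 everywhere
    errors_and_bounds.append((n, actual_error, bound))
    print(n, actual_error, bound)
```

In each row the actual error stays below the bound, so the bound can be used to pick the smallest $n$ that guarantees a required accuracy before any value is computed.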